The most beautiful thing we can experience is the mysterious. - Albert Einstein

One area that caught my attention was compute-driven scientific discovery. So I have been trying to understand, from first principles, what role computing and AI will play in scientific discovery in the coming years. Starting from a blank canvas, I thought it’d be best to write some things down, see whether a picture emerges, and perhaps find some common ground for projects and a vision statement for the Atreides research lab. Here are some long, random and incomplete thoughts, starting from the very basics:

"The scientific method was perhaps the single most important development in modern history. It established a way to validate truth at a time when misinformation was the norm, allowing natural philosophers to navigate the unknown. From predicting the motions of the planets to discovering the principles of electricity, scientists have honed the ability to distil facts about the universe by generating theories, then using experimentation to qualify those theories. Looking at how far civilisation has come since the Enlightenment, one can’t help being awestruck by all that humanity has achieved using this approach. I believe artificial intelligence (AI) could usher in a new renaissance of discovery, acting as a multiplier for human ingenuity, opening up entirely new areas of inquiry and spurring humanity to realise its full potential. The promise of AI is that it could serve as an extension of our minds and become a meta-solution. In the same way that the telescope revealed the planetary dynamics that inspired new physics, insights from AI could help scientists solve some of the complex challenges facing society today, from superbugs to climate change to inequality. My hope is to build smarter tools that expand humans’ capacity to identify the root causes and potential solutions to core scientific problems." - Demis Hassabis, on AI's potential

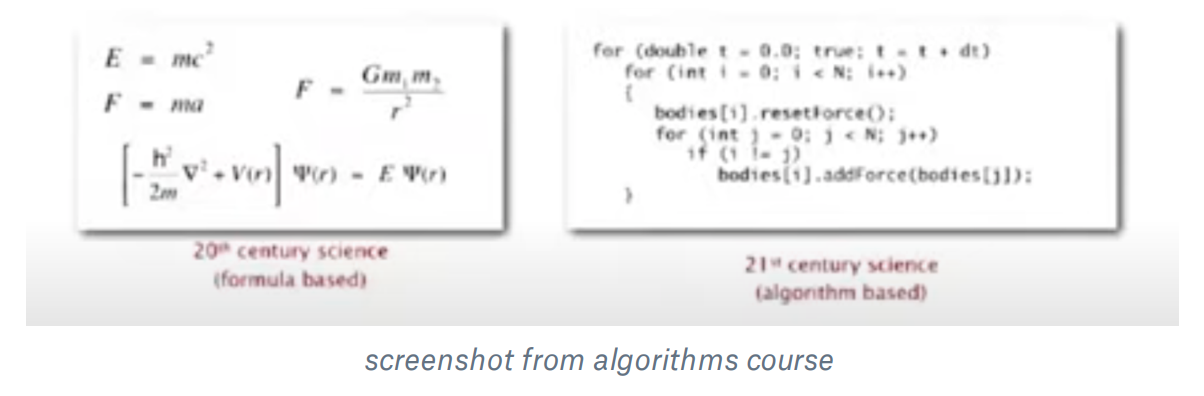

Folks with a CS background are trained in algorithmic thinking, where we learn to think about solving problems using computation. Algorithms are the fundamental unit of programming and computer science, but their applications increasingly go beyond software development. Avi Wigderson says “Algorithms are a common language for nature, human, and computer.” Even though CS is a young field, we are increasingly realising that algorithmic thinking is a universal framework that can be applied to the hard sciences as well. Robert Sedgewick, who teaches algorithms at Princeton, says computational models are replacing mathematical models in scientific inquiry and discovery.

This means algorithms are a common language for understanding nature: we can simulate a given phenomenon in order to understand it better. This attitude, however, does have its limitations.
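To make the contrast between a computational model and a mathematical model concrete, here is a minimal sketch that assumes radioactive decay as a stand-in phenomenon (the example, function names and parameters are illustrative choices, not taken from Sedgewick). The simulation steps through time atom by atom; the closed form gives the expected count directly. For a system this simple the two agree, and the simulation approach keeps working for systems where no closed form is available.

```python
import random


def simulate_decay(n_atoms: int, p_decay: float, steps: int, seed: int = 0) -> list:
    """Computational model (illustrative): evolve the system step by step.

    Each surviving atom independently decays with probability `p_decay`
    per time step. Returns the number of surviving atoms after each step.
    """
    rng = random.Random(seed)  # seeded so runs are reproducible
    alive = n_atoms
    counts = [alive]
    for _step in range(steps):
        decayed = sum(1 for _atom in range(alive) if rng.random() < p_decay)
        alive -= decayed
        counts.append(alive)
    return counts


def expected_decay(n_atoms: int, p_decay: float, steps: int) -> list:
    """Mathematical model: closed-form expected survivors, N0 * (1 - p)^t."""
    return [n_atoms * (1 - p_decay) ** t for t in range(steps + 1)]


if __name__ == "__main__":
    simulated = simulate_decay(10_000, 0.05, 20)
    predicted = expected_decay(10_000, 0.05, 20)
    for t in (0, 5, 10, 20):
        print(f"t={t:2d}  simulated={simulated[t]:5d}  closed form={predicted[t]:8.1f}")
```

The simulated counts fluctuate around the closed-form curve; adding interactions between atoms would break the formula but not the simulation.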

Data-centric computing and data science

Computer science has evolved as a discipline. It’s interesting to see how MIT’s intro to computation course has changed its structure over time, with more emphasis on technical topics for data analysis. AI software is replacing traditional software; some dub this phenomenon “software 2.0”. I like the term data-centric computing for describing it.
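One way to see the shift from hand-written rules to learned behaviour is to write the same function twice. The sketch below is a toy illustration under my own assumptions (the Celsius-to-Fahrenheit task, the example pairs and the function names are all chosen for illustration): the first version encodes the rule by hand, the second recovers it from input/output examples with ordinary least squares.

```python
def fahrenheit_handwritten(celsius: float) -> float:
    """'Software 1.0': a programmer writes the rule explicitly."""
    return celsius * 9 / 5 + 32


def fit_line(xs, ys):
    """Closed-form ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Toy 'software 2.0': behaviour learned from (hypothetical) example pairs
example_celsius = [-40.0, 0.0, 37.0, 100.0]
example_fahrenheit = [-40.0, 32.0, 98.6, 212.0]
slope, intercept = fit_line(example_celsius, example_fahrenheit)


def fahrenheit_learned(celsius: float) -> float:
    """Same task, but the parameters came from data rather than a programmer."""
    return slope * celsius + intercept


print(fahrenheit_handwritten(25.0), round(fahrenheit_learned(25.0), 6))
```

Real learned systems fit far more parameters, typically with gradient descent, but the division of labour is similar: the programmer supplies data and a model family, and the data fixes the behaviour.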

Data science is the process of formulating a quantitative question that can be answered with data, collecting and cleaning the data, analyzing the data, and communicating the answer to the question to a relevant audience. If we do want a concise definition, the following seems to be reasonable: Data science is the application of computational and statistical techniques to address or gain insight into some problem in the real world.

The key phrases of importance here are “computational” (data science typically involves some sort of algorithmic methods written in code), “statistical” (statistical inference lets us build the predictions that we make), and “real world” (we are talking about deriving insight not into some artificial process, but into some “truth” in the real world).
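The process in the definition above (formulate a question, collect and clean data, analyse it, communicate the answer) can be sketched as a tiny pipeline. Everything here is hypothetical: the question, the raw records, the `-999` missing-value sentinel and the function names are illustrative assumptions, and reporting a standard error is just one simple choice of statistical summary.

```python
import statistics

# 1. Question (hypothetical): what is the typical value of some measured quantity?
# 2. Collection: raw records as they might arrive, including unusable entries
raw_records = ["21.5", "22.1", "", "n/a", "20.9", "23.4", "-999", "22.0"]


def clean(records, missing_sentinel=-999.0):
    """Parse records to floats, dropping unparseable entries and sentinel values."""
    values = []
    for record in records:
        try:
            value = float(record)
        except ValueError:
            continue  # empty strings, "n/a", etc.
        if value == missing_sentinel:
            continue  # placeholder for a missing measurement, not a real reading
        values.append(value)
    return values


def analyze(values):
    """3. Analysis: point estimate plus the standard error of the mean."""
    mean = statistics.mean(values)
    std_error = statistics.stdev(values) / len(values) ** 0.5
    return mean, std_error


def communicate(mean, std_error, n):
    """4. Communication: a plain-language summary for the audience."""
    return f"Estimated mean: {mean:.2f} (standard error {std_error:.2f}, n={n})"


values = clean(raw_records)
mean, std_error = analyze(values)
print(communicate(mean, std_error, len(values)))
```

Most of the lines go to cleaning rather than analysis, which is typical of real data work.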

Another way of looking at it, in some sense, is that data science is simply the union of the various techniques that are required to accomplish the above. That is, something like:

Data science = statistics + data collection + data preprocessing + machine learning + visualisation + business insights + scientific hypotheses + big data + (etc)

This definition is also useful, mainly because it emphasizes that all these areas are crucial to achieving the goals of data science. In fact, in some sense data science is best defined in terms of what it is not: namely, it is not (just) any one of the subjects above.

Data-centric computing = data science + data structures

Data-centric computing is characterised by programs that interrogate data: that is, programs are tools for answering questions.
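As a small illustration of a program acting as a tool for answering a question, the sketch below asks "which site has the highest average reading?" of a table. The table, column names and helper function are hypothetical; the point is that choosing a data structure (here a dictionary keyed by site) is what makes the question easy to answer.

```python
from collections import defaultdict

# Hypothetical table: one row per observation
observations = [
    {"site": "Site A", "month": "Jan", "reading": 2.0},
    {"site": "Site A", "month": "Jul", "reading": 14.0},
    {"site": "Site B", "month": "Jan", "reading": 10.0},
    {"site": "Site B", "month": "Jul", "reading": 20.0},
]


def average_by(rows, group_key, value_key):
    """Group rows by `group_key` and return the mean of `value_key` per group."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for row in rows:
        totals[row[group_key]] += row[value_key]
        counts[row[group_key]] += 1
    return {group: totals[group] / counts[group] for group in totals}


averages = average_by(observations, "site", "reading")
highest = max(averages, key=averages.get)
print(averages, "->", highest)
```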

AI as an enabler of scientific discovery - Case Studies

In the mid-twentieth century, Margaret Oakley Dayhoff pioneered the analysis of protein sequencing data, a forerunner of genome sequencing, leading early research that used computers to analyse patterns in the sequences. Today, the expression ‘artificial intelligence’ is an umbrella term. It refers to a suite of technologies that can perform complex tasks when acting in conditions of uncertainty, including visual perception, speech recognition, natural language processing, reasoning, learning from data, and a range of optimisation problems.

Using genomic data to predict protein structures: Understanding a protein’s shape is key to understanding the role it plays in the body. By predicting these shapes, scientists can identify proteins that play a role in diseases, improving diagnosis and helping develop new treatments. The process of determining protein structures is both technically difficult and labour-intensive, yielding approximately 100,000 known structures to date [5]. While advances in genetics in recent decades have provided rich datasets of DNA sequences, determining the shape of a protein from its corresponding genetic sequence (the protein-folding challenge) is a complex task. To help understand this process, researchers are developing machine learning approaches that can predict the three-dimensional structure of proteins from their amino acid sequences, which are in turn encoded by DNA. The AlphaFold project at DeepMind, for example, has created a deep neural network that predicts the distances between pairs of amino acids and the angles between their bonds, and in so doing produces a highly accurate prediction of an overall protein structure.
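The inter-residue distances mentioned above are easy to picture as a matrix. The sketch below does not predict anything and is not AlphaFold's method; it only computes, from known coordinates, the kind of pairwise-distance and contact representation that such a network is trained to predict. The coordinates are hypothetical (an idealised straight chain with C-alpha atoms 3.8 Å apart, roughly the spacing of consecutive residues), using C-alpha atoms is a simplification, and the 8 Å contact cut-off is a common convention rather than something taken from the source.

```python
import math

# Hypothetical C-alpha coordinates (ångströms) for an idealised straight fragment
coords = [
    (0.0, 0.0, 0.0),
    (3.8, 0.0, 0.0),
    (7.6, 0.0, 0.0),
    (11.4, 0.0, 0.0),
]


def distance_matrix(points):
    """Pairwise Euclidean distances between every pair of residues."""
    return [[math.dist(p, q) for q in points] for p in points]


def contact_map(distances, cutoff=8.0):
    """Boolean matrix: True where two residues are closer than `cutoff`."""
    return [[d < cutoff for d in row] for row in distances]


dists = distance_matrix(coords)
contacts = contact_map(dists)
for row in contacts:
    print(["x" if c else "." for c in row])
```

A structure-prediction model outputs an estimate of such a matrix from sequence alone; turning it back into 3D coordinates is a separate optimisation step.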

Understanding complex organic chemistry: The goal of this pilot project between the John Innes Centre and The Alan Turing Institute is to investigate possibilities for machine learning in modelling and predicting the process of triterpene biosynthesis in plants. Triterpenes are complex molecules which form a large and important class of plant natural products, with diverse commercial applications across the health, agriculture and industrial sectors. The triterpenes are all synthesized from a single common substrate which can then be further modified by tailoring enzymes to give over 20,000 structurally diverse triterpenes. Recent machine learning models have shown promise at predicting the outcomes of organic chemical reactions. Successful prediction based on sequence will require both a deep understanding of the biosynthetic pathways that produce triterpenes and novel machine learning methodology.

• Finding patterns in astronomical data: Driving scientific discovery from particle physics experiments and large-scale astronomical data
• Understanding the effects of climate change on cities and regions: Satellite imaging to support conservation
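Many pattern-finding tasks on astronomical data start with flagging unusual measurements. As a minimal sketch, assuming a hypothetical brightness series (light curve) and the modified z-score based on the median absolute deviation (MAD) with the commonly used 3.5 threshold, the code below flags points that stand out from the rest. Real survey or particle-physics pipelines are far more involved.

```python
import statistics


def robust_outliers(values, threshold=3.5):
    """Return indices whose modified z-score exceeds `threshold`.

    Uses the median and the median absolute deviation (MAD), which are much
    less distorted by the outliers themselves than the mean and standard deviation.
    """
    median = statistics.median(values)
    mad = statistics.median(abs(v - median) for v in values)
    if mad == 0:
        return []  # no spread to measure against
    # 0.6745 rescales the MAD so scores are comparable to ordinary z-scores for normal data
    return [i for i, v in enumerate(values) if abs(0.6745 * (v - median) / mad) > threshold]


# Hypothetical normalised brightness measurements with a short dip at indices 7 and 8
flux = [1.00, 1.01, 0.99, 1.00, 1.02, 0.98, 1.00, 0.90, 0.91, 1.00, 1.01, 0.99]
print(robust_outliers(flux))
```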

The ‘traditional’ way to apply data science methods is to start from a large data set, and then apply machine learning methods to try to discover patterns that are hidden in the data, without taking into account anything about where the data came from or current knowledge of the system. But might it be possible to incorporate existing scientific knowledge (for example, in the form of a statistical ‘prior’) so that the discovery process is constrained and produces results that respect what researchers already know about the system? For example, if trying to detect the 3D shape of a protein from image data, could chemical knowledge of how proteins fold be incorporated in the analysis in order to guide the search?

The goal of scientific discovery is to understand. Researchers want to know not just what the answer is but why. Are there ways of using AI algorithms that will provide such explanations? In what ways might AI-enabled analysis and hypothesis-led research sit alongside each other in future? How might people work with AI to solve scientific mysteries in the years to come? Is it possible that one day, computational methods will not only discover patterns and unusual events in data, but have enough domain knowledge built in that they can themselves make new scientific breakthroughs? Could they come up with new theories that revolutionise our understanding, and devise novel experiments to test them out? Could they even decide for themselves what the worthwhile scientific questions are? And worthwhile to whom?
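The idea of encoding existing knowledge as a statistical ‘prior’ can be shown with the simplest conjugate model. In this minimal sketch (the scenario and numbers are hypothetical), prior knowledge says some success rate is around 0.2, encoded as a Beta(2, 8) distribution, and a new experiment yields 3 successes in 3 trials. The data-only estimate jumps to 1.0, while the Beta-Binomial posterior mean is pulled toward what was already known.

```python
def mle_rate(successes: int, trials: int) -> float:
    """Data-only estimate: the observed fraction of successes."""
    return successes / trials


def posterior_mean_rate(successes: int, trials: int, prior_a: float, prior_b: float) -> float:
    """Beta-Binomial update: a Beta(a, b) prior becomes Beta(a + k, b + n - k).

    Returns the mean of that posterior, (a + k) / (a + b + n).
    """
    return (prior_a + successes) / (prior_a + prior_b + trials)


k, n = 3, 3        # hypothetical new observations
a, b = 2.0, 8.0    # hypothetical prior knowledge: mean a / (a + b) = 0.2
print(mle_rate(k, n), round(posterior_mean_rate(k, n, a, b), 4))
```

As more data arrive, the prior's influence shrinks and the two estimates converge, so the prior constrains discovery without overriding strong evidence.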

The scientific method is about building error-correction systems: ones that become better over time.